WebSocket Patterns in Practice: Building Real-Time Notifications from Scratch

I needed real-time notifications and dove into WebSockets. From HTTP polling to Supabase Realtime—practical patterns for real-time communication.

HTTP is Walkie-Talkie (Over). WebSocket is Phone (Hello). The secret tech behind Chat and Stock Charts.

Once you ship a public API, you can't change it freely. Compare four versioning strategies for evolving APIs without breaking clients, plus analysis of real-world choices by GitHub, Stripe, and Twilio.

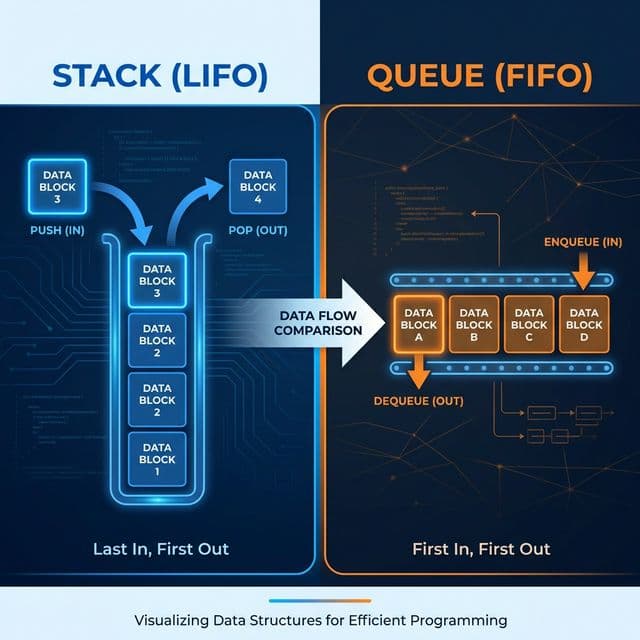

Pringles can (Stack) vs Restaurant line (Queue). The most basic data structures, but without them, you can't understand recursion or message queues.

Why did Facebook ditch REST API? The charm of picking only what you want with GraphQL, and its fatal flaws (Caching, N+1 Problem).

I was adding notifications to a side project. The classic kind: someone comments on your post, a badge pops up, you see the message. Simple enough on the surface.

But then I hit the wall. HTTP doesn't work that way. The server can't reach out first. Clients make requests, servers respond. That's the deal.

HTTP is sending letters. You write a letter (request), drop it in the mailbox, and wait for a reply (response). The server can't just knock on your door because something interesting happened. The mailman only walks one direction.

So I started researching the options. There turned out to be more than I expected.

The naive solution was to just ask the server repeatedly: "Any new notifications?" every few seconds.

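```typescript
function startPolling(userId: string) {
  // Ask the server for new notifications every 5 seconds.
  const interval = setInterval(async () => {
    try {
      const response = await fetch(`/api/notifications?userId=${encodeURIComponent(userId)}`);
      if (!response.ok) return; // skip this tick; the next one retries
      const { notifications } = await response.json();
      if (notifications.length > 0) {
        showNotifications(notifications);
      }
    } catch (error) {
      // A network failure should not surface as an unhandled rejection.
      console.error('Polling failed:', error);
    }
  }, 5000);

  // Return a cleanup function that stops polling.
  return () => clearInterval(interval);
}
```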

It works. Notifications appear. But it's checking the mailbox 17,000 times a day when the mailman only comes once. With 100 users polling every 5 seconds, that's 1,200 requests per minute — most of them returning "nothing new." Up the frequency for better UX, multiply the server load proportionally.
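
The arithmetic above generalizes to any user count and interval. A small sketch, where `pollingLoad` is a made-up helper name and not part of any library:

```typescript
// Back-of-the-envelope polling load. `pollingLoad` is a hypothetical helper.
function pollingLoad(users: number, intervalSeconds: number) {
  // How many times one client asks the server in 24 hours.
  const requestsPerUserPerDay = (24 * 60 * 60) / intervalSeconds;
  // How many requests the server sees each minute across all clients.
  const requestsPerMinute = users * (60 / intervalSeconds);
  return { requestsPerUserPerDay, requestsPerMinute };
}

// 100 users at a 5-second interval:
// 17,280 requests per user per day, 1,200 requests per minute in total.
console.log(pollingLoad(100, 5));
```

Halving the interval doubles both numbers, which is the proportional cost mentioned above.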

Instead of checking every 5 seconds, hold the connection open until the server has something to say. The server doesn't respond until a new event occurs. When it does respond, the client immediately opens a new request.

Standing at the mailbox waiting instead of walking back and forth. Better than constant polling — fewer wasteful requests. But it's still HTTP request-response mechanics underneath, which means timeout handling, retry logic, and connection management get complicated fast.
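
A minimal sketch of that loop, to show where the retry and timeout handling creeps in. The `requestEvents` parameter is a placeholder I introduced for illustration: it stands for whatever call the server holds open until it has something to report.

```typescript
// Long-polling loop: wait for a response, handle it, immediately ask again.
// `requestEvents` is a hypothetical callback; it should resolve with an empty
// array when the server-side timeout fires with nothing new.
async function longPoll<T>(
  requestEvents: (signal: AbortSignal) => Promise<T[]>,
  onEvents: (events: T[]) => void,
  signal: AbortSignal,
  retryDelayMs = 1000,
): Promise<void> {
  while (!signal.aborted) {
    try {
      const events = await requestEvents(signal);
      if (events.length > 0) onEvents(events);
      // Fall through: the next iteration opens a new request right away.
    } catch {
      if (signal.aborted) break;
      // Network error or server failure: pause before retrying so a
      // broken endpoint is not hit in a tight loop.
      await new Promise((resolve) => setTimeout(resolve, retryDelayMs));
    }
  }
}
```

In a browser, `requestEvents` could wrap `fetch` with the same `signal` against an endpoint that holds the request open, and calling `abort()` on the controller stops the loop. The endpoint and its timeout behavior are assumptions, not something this project defines.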

SSE (Server-Sent Events) establishes a one-way stream over HTTP. The client connects once, and the server pushes events as they happen using the browser's built-in EventSource API.

SSE is a radio broadcast. Tune in (connect) and the station plays music (sends events) as long as you're listening. You don't have to ask "what's playing now?" — it just comes through. The catch: the listener can't broadcast back. It's one-way.

For notifications — a server-to-client use case — SSE is actually a solid fit. No library needed. HTTP/2 multiplexes multiple SSE connections over a single TCP connection. Works in serverless environments. The limitation is that the client can't send data back through the same channel.
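
Part of why SSE needs no library is that the wire format is plain text: the server responds with `Content-Type: text/event-stream` and writes one small text block per event. A sketch of a serializer for that format, with `formatSseEvent` and `SseEvent` as illustrative names of my own:

```typescript
// Serialize one event in the text/event-stream wire format.
// Each field is a "name: value" line; a blank line ends the event.
interface SseEvent {
  data: string;
  event?: string; // optional event name; browsers default to "message"
  id?: string; // optional ID, sent back as Last-Event-ID when the browser reconnects
}

function formatSseEvent({ data, event, id }: SseEvent): string {
  const lines: string[] = [];
  if (event !== undefined) lines.push(`event: ${event}`);
  if (id !== undefined) lines.push(`id: ${id}`);
  // Multi-line payloads need one "data:" line per line of text.
  for (const line of data.split('\n')) lines.push(`data: ${line}`);
  return lines.join('\n') + '\n\n';
}

console.log(
  formatSseEvent({
    event: 'notification',
    id: '42',
    data: JSON.stringify({ message: 'New comment on your post' }),
  }),
);
```

On the client, `new EventSource(url)` plus `addEventListener('notification', handler)` would receive the event above, with the JSON string available as `event.data`.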

WebSocket starts with an HTTP handshake, then upgrades to a full-duplex persistent connection. Both sides can send messages at any time.

WebSocket is a phone call. Once connected, server and client can talk simultaneously. The server says "someone just commented on your post" and the client hears it instantly. The client says "mark that as read" and the server hears it instantly. Neither has to wait for the other to finish.
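
Because both sides can speak at any time, it helps to agree on message shapes up front. The sketch below is one possible protocol for the exchange described above; the message types (`notification`, `mark_read`) and function names are hypothetical, not a standard.

```typescript
// Hypothetical message shapes for a notification channel.
type ServerMessage = { type: 'notification'; id: string; message: string };
type ClientMessage = { type: 'mark_read'; id: string };

// Validate an incoming frame before trusting it. Returns null for anything
// that is not valid JSON or does not match a known message shape.
function parseServerMessage(raw: string): ServerMessage | null {
  let value: unknown;
  try {
    value = JSON.parse(raw);
  } catch {
    return null;
  }
  if (typeof value !== 'object' || value === null) return null;
  const msg = value as Record<string, unknown>;
  if (
    msg.type === 'notification' &&
    typeof msg.id === 'string' &&
    typeof msg.message === 'string'
  ) {
    return { type: 'notification', id: msg.id, message: msg.message };
  }
  return null;
}

// Serialize an outgoing message for ws.send().
function encodeClientMessage(message: ClientMessage): string {
  return JSON.stringify(message);
}
```

With a browser `WebSocket` named `ws`, the server-to-client direction is `ws.onmessage = (e) => parseServerMessage(e.data)` and the client-to-server direction is `ws.send(encodeClientMessage({ type: 'mark_read', id }))`, both over the same connection.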

| Approach | Direction | Overhead | Complexity | Best For |
|---|---|---|---|---|
| Polling | Client→Server | High | Low | Simple status checks |
| Long Polling | Client→Server | Medium | Medium | Low-frequency notifications |
| SSE | Server→Client | Low | Low | Notifications, feeds, dashboards |
| WebSocket | Bidirectional | Low | High | Chat, collaboration, games |

WebSocket infrastructure is non-trivial. Connection management, reconnection logic, scaling, serverless limitations. Since I was already on Supabase, I looked at their Realtime feature first.

Supabase Realtime broadcasts database changes to clients via WebSocket. It hooks into PostgreSQL's logical replication — when rows are inserted, updated, or deleted, subscribed clients get notified immediately.

The setup is nearly zero. Once Realtime is enabled for the table, the client code is:

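```typescript
import { createClient } from '@supabase/supabase-js';

// Assumed row shape for the notifications table. Declaring it here also
// shadows the browser's built-in Notification type, which has no `message`.
interface Notification {
  user_id: string;
  message: string;
}

const supabase = createClient(
  process.env.NEXT_PUBLIC_SUPABASE_URL!,
  process.env.NEXT_PUBLIC_SUPABASE_ANON_KEY!
);

function subscribeToNotifications(userId: string, onNew: (notification: Notification) => void) {
  const channel = supabase
    .channel(`notifications:${userId}`)
    .on(
      'postgres_changes',
      {
        // Only new rows, and only rows addressed to this user.
        event: 'INSERT',
        schema: 'public',
        table: 'notifications',
        filter: `user_id=eq.${userId}`,
      },
      (payload) => {
        onNew(payload.new as Notification);
      }
    )
    .subscribe((status) => {
      if (status === 'SUBSCRIBED') {
        console.log('Realtime subscription active');
      }
    });

  // Return a cleanup function that tears down the subscription.
  return () => {
    supabase.removeChannel(channel);
  };
}
```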

Insert a row into the notifications table from anywhere (another client, a server function, a trigger) and every client subscribed with a matching `user_id` filter gets the event in real time. No separate WebSocket server. No scaling configuration. A React component that uses the subscription looks like this:

```typescript
import { useEffect, useState } from 'react';
// `toast` and `BellIcon` come from whichever UI libraries the project uses.

function NotificationBell({ userId }: { userId: string }) {
  const [notifications, setNotifications] = useState<Notification[]>([]);

  useEffect(() => {
    const unsubscribe = subscribeToNotifications(userId, (notification) => {
      // Newest notification first.
      setNotifications((prev) => [notification, ...prev]);
      toast(`New notification: ${notification.message}`);
    });
    // Unsubscribe on unmount or when userId changes.
    return unsubscribe;
  }, [userId]);

  return (
    <button className="relative">
      <BellIcon />
      {notifications.length > 0 && (
        <span className="badge">{notifications.length}</span>
      )}
    </button>
  );
}
```

That's the whole implementation. For a side project on Vercel with Supabase already in the stack, this was the obvious answer. Most small teams don't need to go further.

When you need more control — custom protocols, server infrastructure you own, bidirectional communication beyond what Supabase Realtime offers — you handle WebSockets directly. The piece that took me the longest to get right was reconnection.

WebSocket connections drop. Unstable networks, server restarts, clients going offline briefly. Just showing "connection lost" is bad UX. Auto-reconnect is expected. But naive reconnection — immediately retrying on disconnect — creates a thundering herd when a server restarts and thousands of clients try to reconnect simultaneously.

Exponential backoff with jitter is the solution:

```typescript
class ReliableWebSocket {
  private ws: WebSocket | null = null;
  private reconnectAttempts = 0;
  private maxReconnectAttempts = 5;
  private reconnectTimer: ReturnType<typeof setTimeout> | null = null;
  private isIntentionallyClosed = false;

  constructor(
    private url: string,
    private onMessage: (data: unknown) => void,
    private onStatusChange?: (status: 'connected' | 'disconnected' | 'reconnecting') => void
  ) {
    this.connect();
  }

  private connect() {
    this.ws = new WebSocket(this.url);

    this.ws.onopen = () => {
      this.reconnectAttempts = 0;
      this.onStatusChange?.('connected');
    };

    this.ws.onmessage = (event) => {
      try {
        const data = JSON.parse(event.data);
        this.onMessage(data);
      } catch {
        console.error('Message parse error:', event.data);
      }
    };

    this.ws.onclose = () => {
      if (this.isIntentionallyClosed) return;
      this.onStatusChange?.('disconnected');
      this.scheduleReconnect();
    };
  }

  private scheduleReconnect() {
    if (this.reconnectAttempts >= this.maxReconnectAttempts) return;

    // Exponential backoff: 1s, 2s, 4s, 8s, 16s (max 30s)
    const delay = Math.min(1000 * Math.pow(2, this.reconnectAttempts), 30000);
    // Jitter: spread reconnects across time to avoid thundering herd
    const jitter = Math.random() * 1000;

    this.onStatusChange?.('reconnecting');
    this.reconnectAttempts++;

    this.reconnectTimer = setTimeout(() => {
      this.connect();
    }, delay + jitter);
  }

  send(data: unknown) {
    if (this.ws?.readyState === WebSocket.OPEN) {
      this.ws.send(JSON.stringify(data));
    }
  }

  close() {
    this.isIntentionallyClosed = true;
    if (this.reconnectTimer) clearTimeout(this.reconnectTimer);
    this.ws?.close();
  }
}
```

Two things make this work:

Exponential backoff: Retry intervals grow — 1s, 2s, 4s, 8s, 16s. If the server is down, clients back off instead of hammering it. The server gets breathing room to restart.

Jitter: Random noise added to each delay. Without it, clients that disconnected at the same moment retry at the same moment. A coordinated retry wave can be as bad as the original load. Jitter spreads them out.
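
To see the schedule on its own, the delay calculation can be pulled out into a pure function. This is a sketch using the same constants as the class above; `reconnectDelay` is an illustrative name, and the injectable `random` parameter is there only so the output can be checked deterministically.

```typescript
// Delay in milliseconds before reconnect attempt number `attempt` (0-based).
// Same formula as scheduleReconnect: capped exponential backoff plus jitter.
function reconnectDelay(
  attempt: number,
  random: () => number = Math.random,
  baseMs = 1000,
  maxMs = 30000,
  jitterMs = 1000,
): number {
  const backoff = Math.min(baseMs * Math.pow(2, attempt), maxMs);
  return backoff + random() * jitterMs;
}

// With jitter switched off, the first five delays are the pure backoff steps.
const schedule = [0, 1, 2, 3, 4].map((attempt) => reconnectDelay(attempt, () => 0));
console.log(schedule); // [1000, 2000, 4000, 8000, 16000]
```

With the default `Math.random`, each delay lands somewhere in the second after its backoff step, so two clients that dropped at the same instant are unlikely to retry at the same instant.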

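On Vercel, Netlify, and AWS Lambda, functions execute per request and terminate, so they can't hold persistent TCP connections. WebSocket servers require long-lived processes. Of the approaches covered in this post, that leaves two for serverless real-time: SSE, which runs over plain HTTP, or a managed service such as Supabase Realtime.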

This was a direct reason I chose Supabase Realtime on my Next.js/Vercel project. There's no good path to running a WebSocket server on the platform.

Firewalls and proxies close idle connections — especially on mobile networks. A WebSocket connection can appear open on both ends while actually being dead in the middle. Fix this with periodic pings: client sends ping every 30 seconds, server responds with pong. If no pong arrives within a timeout window, treat the connection as dead and reconnect.

The WebSocket protocol has built-in ping/pong frames, but many implementations also handle this at the application layer for more control.
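
A sketch of the application-layer version follows. It simplifies the scheme above by using the ping interval itself as the timeout window: if the previous ping is still unanswered when the next one is due, the connection is treated as dead. The `{ type: 'ping' }` message shape and the `Heartbeat` class are assumptions for illustration; the server would need to answer with a matching pong message.

```typescript
// Minimal surface the heartbeat needs; a browser WebSocket fits this shape.
interface HeartbeatSocket {
  send(data: string): void;
  close(): void;
}

class Heartbeat {
  private awaitingPong = false;
  private readonly socket: HeartbeatSocket;

  constructor(socket: HeartbeatSocket) {
    this.socket = socket;
  }

  // Call once per ping interval. Returns false when the previous ping was
  // never answered, in which case the socket has been closed.
  tick(): boolean {
    if (this.awaitingPong) {
      this.socket.close();
      return false;
    }
    this.awaitingPong = true;
    this.socket.send(JSON.stringify({ type: 'ping' }));
    return true;
  }

  // Call when the server's pong message arrives.
  handlePong(): void {
    this.awaitingPong = false;
  }
}
```

In use, a `setInterval` would call `tick()` every 30 seconds and clear itself when `tick()` returns false, while the message handler calls `handlePong()` on each pong. Closing the socket then hands control to whatever reconnection logic is attached to the close event.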

Events that arrive while disconnected are missed. On reconnect, you need to decide: fetch missed events (pass the last received timestamp to the server) or accept the gap. Either way, surface connection status to the user. A small "Reconnecting..." indicator beats silent failure every time. Users who don't know they're offline will assume your app is broken.
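
If you choose to fetch missed events, the client-side bookkeeping is small. The sketch below assumes each event carries an `id` and an ISO 8601 `createdAt` field; both field names and both helper names are hypothetical.

```typescript
interface NotificationEvent {
  id: string;
  createdAt: string; // ISO 8601 timestamp (assumed field name)
  message: string;
}

// Newest timestamp seen so far: the cursor to send to the server on reconnect.
function lastReceivedAt(events: NotificationEvent[]): string | undefined {
  let latest: NotificationEvent | undefined;
  for (const event of events) {
    if (!latest || Date.parse(event.createdAt) > Date.parse(latest.createdAt)) {
      latest = event;
    }
  }
  return latest?.createdAt;
}

// Merge events fetched after a reconnect into the current list.
// Duplicates are dropped by id, and the result is sorted newest first.
function mergeMissed(
  current: NotificationEvent[],
  missed: NotificationEvent[],
): NotificationEvent[] {
  const byId = new Map<string, NotificationEvent>();
  for (const event of [...current, ...missed]) byId.set(event.id, event);
  return Array.from(byId.values()).sort(
    (a, b) => Date.parse(b.createdAt) - Date.parse(a.createdAt),
  );
}
```

Deduplicating by `id` matters because the server may return an event whose timestamp equals the cursor, or a live event may arrive over the socket while the catch-up request is still in flight.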

Match the tool to the communication pattern. One-way notifications → SSE or Supabase Realtime. Bidirectional interaction → WebSocket. Infrequent updates → polling is fine.

Supabase Realtime removes most of the infrastructure problem. If you're already on Supabase, database-triggered real-time events require almost no setup. This covers the majority of small-project use cases.

Reconnection logic is not optional. Any WebSocket implementation without exponential backoff and jitter is incomplete. Networks fail. Handle it gracefully.

Serverless and persistent connections don't mix. Know your deployment environment before choosing an approach. WebSocket servers need long-lived processes.

Show connection state to users. Silent failure — where the app appears to work but stopped receiving events — is worse than an explicit "reconnecting" message.

I started this wanting to spend a weekend on notifications. I ended up understanding the whole spectrum of real-time communication patterns. The Supabase Realtime integration took about an hour. The reconnection logic I wrote for the raw WebSocket case took most of a day. Both were worth understanding. Knowing when to reach for the managed solution versus the custom one is the actual skill.